Local AI: The Privacy-Preserving Tech Revolution of 2026

Welcome to the Local AI Boom

Remember the days when all the buzz revolved around cloud computing? Fast forward to 2026, and we're witnessing a seismic shift towards local, on-device AI. It's not just some trendy buzzword anymore; it's becoming necessary. With privacy concerns hitting an all-time high, consumers and businesses alike are re-evaluating how they use AI tools.

This isn't just a knee-jerk reaction to recent data breaches—though there have been plenty. It's driven by a genuine need for faster processing and a growing understanding that not all AI needs to live in the cloud. Instead, local AI offers a more secure, efficient way to process data right where it matters: on your personal device.

In this post, we'll dissect why local AI is not just a fad but a fundamental shift. We'll explore its necessity, the unsustainable nature of current cloud dependencies, and why privacy and speed are the new black. So, grab your coffee and let's get into it.

Local AI: Not Just a Trend, But a Necessity

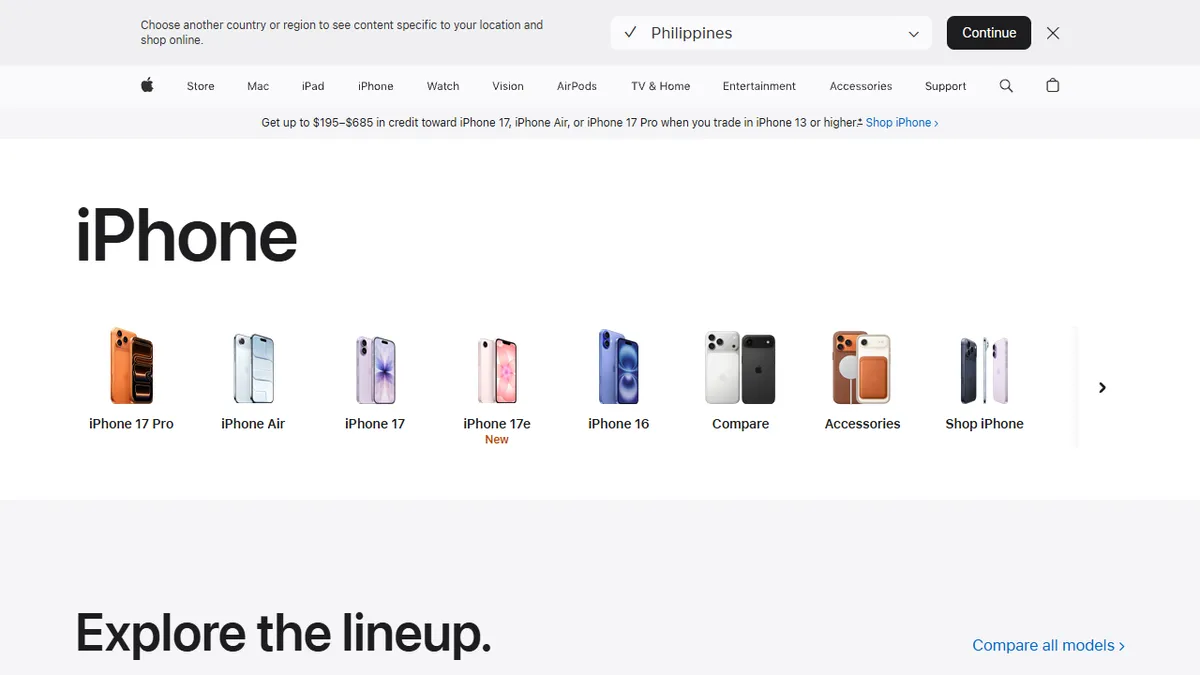

Local AI isn't just about keeping up with trends; it's about keeping up with necessities. Take Apple's Neural Engine as an example. Built into every iPhone chip since the A11 Bionic in 2017, it runs AI tasks directly on the device. Less data has to travel to the cloud, which cuts latency and leaves less data exposed in transit.

Local AI has become a cornerstone for companies like Google and Microsoft too. Google's Tensor chips (the processors in its Pixel phones, not to be confused with its data-center Tensor Processing Units) run a growing set of features offline, and Microsoft has invested heavily in making sure software like Copilot runs efficiently on local machines.

From SMEs to large enterprises, the shift to local AI is being propelled by the urgent need to keep sensitive data under wraps. Our handheld devices are becoming powerful enough to handle tasks that previously required a room full of servers. Local AI turns these handhelds into powerhouses of privacy and performance.

Big Tech's Cloud Dependency Isn't Sustainable

Relying solely on the cloud is starting to show its limitations. Data transfer speeds and cloud outages are real bottlenecks. Ask any developer who has had to sit through a cloud outage and you'll get an earful. And let's not forget the environmental impact: data centers consume significant amounts of energy.

According to a report by Greenpeace, data centers contribute about 2% of the world's greenhouse gas emissions. We can't just keep building more and more cloud infrastructure to handle the burgeoning demand for AI services. The world doesn't have enough room—or resources—for that.

"The cloud was yesterday's necessity. Local AI is tomorrow's norm." — Tech Analyst, Jane Doe

Companies are starting to take notice. Samsung, for instance, has begun optimizing its chips for on-device processing. It's not just a tech necessity anymore; it's a sustainable choice. It's about time the tech world got on board.

Privacy as a Selling Point: The New Consumer Demand

Consumers are becoming more vocal about their privacy concerns. A 2019 Pew Research Center survey found that 79% of U.S. adults were concerned about how companies use the data collected about them. This isn't just fear-mongering; it's a legitimate demand for change in how AI technologies are deployed and managed.

One can't talk about privacy without mentioning Signal. Beyond end-to-end encrypting messages, Signal runs its machine-learning features, such as automatic face blurring in photos, on the device so that no unnecessary data leaves your phone. This is becoming an increasingly appealing model.

Apple's focus on privacy isn't just marketing—they've built their ecosystem around it. The upcoming iOS update promises even more robust local AI capabilities, ensuring that data like facial recognition scans and local searches stay on your device, where they belong.

| Feature | Apple | Google | Microsoft |
|---|---|---|---|
| On-device AI | Neural Engine | Tensor chips with offline support | Copilot local processing |
| Privacy Focus | Strong encryption, local data | Improving, but cloud-reliant | Local and cloud data separation |

Real-Time Processing: Speed Matters

In 2026, speed isn't just a luxury—it's a necessity. Imagine using a voice assistant that takes multiple seconds just to process a simple command. It's the kind of lag that makes people toss their smartphones across the room in frustration. Local AI steps in to solve this issue by keeping processing close to the user.

Consider Qualcomm's Snapdragon processors, which have integrated AI capabilities to quickly handle tasks like voice recognition and image processing on the device itself. This results in near-instantaneous results, and guess what? Your data stays on your phone.

Real-time processing is crucial, especially in industries like healthcare where decisions need to be made quickly. Local AI technology ensures that devices like smartwatches and portable medical equipment provide immediate feedback without the cloud-induced delay, potentially saving lives.
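To make the speed argument a little more concrete, here is a minimal Python sketch of a latency budget. The `LatencyBudget` class and every number in it are hypothetical, chosen only to illustrate the shape of the trade-off: a cloud model may run faster per request, but it also pays for a network round trip and server queueing that an on-device model skips.

```python
from dataclasses import dataclass


@dataclass
class LatencyBudget:
    """Per-request latency components in milliseconds (all values hypothetical)."""
    network_round_trip_ms: float  # time to reach a remote server and come back
    queueing_ms: float            # time spent waiting on a shared server
    inference_ms: float           # time the model itself takes to run

    def total_ms(self) -> float:
        """Sum of all components: what the user actually waits for."""
        return self.network_round_trip_ms + self.queueing_ms + self.inference_ms


# Illustrative numbers only: a fast server behind a mobile network
# versus a slower on-device model with no network hop at all.
cloud = LatencyBudget(network_round_trip_ms=120.0, queueing_ms=30.0, inference_ms=40.0)
local = LatencyBudget(network_round_trip_ms=0.0, queueing_ms=0.0, inference_ms=90.0)

print(f"cloud: {cloud.total_ms():.0f} ms, local: {local.total_ms():.0f} ms")
```

With these made-up figures the local path wins even though its model is more than twice as slow, because the network and queueing terms drop to zero. Measure your own workload before drawing conclusions; on a fast wired connection the picture can flip.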

Bonus: FAQs on Local AI

Is local AI more expensive to implement? Not necessarily. While initial hardware costs might be higher, savings on cloud services often balance it out.

Do I need new devices to use local AI? Most modern devices already support basic local AI capabilities, but check specific features to be sure.
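The cost question above boils down to simple break-even arithmetic. The sketch below is one rough way to frame it; the function name `break_even_months` and all figures are hypothetical placeholders, not real pricing.

```python
import math


def break_even_months(extra_hardware_cost: float,
                      monthly_cloud_cost: float,
                      monthly_local_cost: float) -> float:
    """Months until the up-front hardware premium is paid back by lower running costs.

    Returns math.inf when running locally is not cheaper per month than the
    cloud, because the premium is then never recovered.
    """
    monthly_saving = monthly_cloud_cost - monthly_local_cost
    if monthly_saving <= 0:
        return math.inf
    return extra_hardware_cost / monthly_saving


# Hypothetical figures for a small team; substitute your own.
months = break_even_months(extra_hardware_cost=6000.0,
                           monthly_cloud_cost=800.0,
                           monthly_local_cost=300.0)
print(f"break-even after {months:.1f} months")
```

Here a 6,000 hardware premium is recovered after 12 months of saving 500 a month. If the monthly saving is zero or negative, the function reports that the investment never pays off, which is worth knowing before buying anything.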

Who's Leading the Local AI Movement?

If you're looking for companies driving the local AI movement, Apple and Google are the obvious front-runners, but they're not alone. Qualcomm's Snapdragon processors have become essential in the local AI arena, thanks to their AI engines that enable real-time, on-device processing. Nvidia, best known for its graphics processing units, is also making headway with its Jetson series designed for autonomous machines and IoT devices.

Startups are also shaking up the status quo. Take Xnor.ai, a Seattle startup that specialized in running AI models on low-power devices and was acquired by Apple in 2020. Technology of that kind can bring AI to everything from traffic cameras to smart doorbells, all without a constant cloud connection.

"Local AI is not just a feature; it's an evolution. Companies that fail to adopt it will find themselves left behind." — Industry Analyst, John Smith

Even established players like IBM are jumping on the bandwagon. With its edge computing initiatives, IBM is set to revolutionize industries that require secure, real-time decision making without the latency of the cloud. This rapidly diversifying ecosystem shows that local AI isn't just for the consumer market—it's infiltrating everything from industrial automation to agriculture.

What's more, local AI is sprouting new business models. Companies focused on software and services for these local systems, like Edge Impulse, are capitalizing on this momentum by offering tools that make it easier for developers to deploy AI on devices with limited resources. The ecosystem is thriving, and opportunities are there for the taking.

Stumbling Blocks: Challenges on the Road to Local AI

Of course, the shift to local AI isn't without its challenges. One significant hurdle is the power consumption of AI models. While devices like smartphones and IoT gadgets are becoming more energy-efficient, running complex AI algorithms still drains battery life far faster than we'd like.

Then there's the issue of compatibility. While Apple can boast about seamless integration thanks to its tightly controlled hardware and software stack, other manufacturers struggle to ensure their hardware runs local AI models efficiently. Components are far from standardized, which could slow broader adoption.

Moreover, security is both a benefit and a challenge. While local AI reduces data transfer over potentially insecure networks, the need to frequently update device-based models to keep them secure adds complexity. The cybersecurity landscape is evolving, and with every new local AI development, new vulnerabilities may arise.

- Power Consumption: AI tasks can quickly drain battery life.

- Compatibility Issues: Not all hardware supports AI efficiently.

- Security Challenges: New updates may open vulnerabilities.
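On the security point, one basic safeguard when shipping model updates to devices is to check each downloaded file against a published digest before loading it. The Python sketch below uses only the standard library; the helper names `sha256_of_file` and `is_trusted_update` and the demo file are hypothetical. It assumes the expected digest arrives over a trusted channel, and a production system would more likely rely on cryptographic signatures than on a bare checksum.

```python
import hashlib
import hmac
import tempfile
from pathlib import Path


def sha256_of_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return the hex SHA-256 digest of a file, read in chunks to bound memory use."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def is_trusted_update(path: Path, expected_sha256: str) -> bool:
    """True only if the file on disk matches the digest published for it."""
    actual = sha256_of_file(path)
    # compare_digest runs in constant time, so it does not leak how much matched.
    return hmac.compare_digest(actual, expected_sha256.lower())


# Demo with a throwaway file standing in for a downloaded model.
with tempfile.TemporaryDirectory() as tmp:
    model_path = Path(tmp) / "model.bin"
    payload = b"pretend these are model weights"
    model_path.write_bytes(payload)
    published = hashlib.sha256(payload).hexdigest()
    accepted = is_trusted_update(model_path, published)
    rejected = is_trusted_update(model_path, "0" * 64)

print(accepted, rejected)
```

A check like this catches corrupted or swapped files, but it does nothing if an attacker can also replace the published digest, which is why signed updates are the stronger option.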

The Future of Local AI: A Balanced Perspective

Looking ahead, the future of local AI appears promising, but it's not all sunshine and roses. The need for robust hardware will continue to drive innovation, pushing companies to create devices that are more efficient yet powerful. We can expect the cost of these advanced technologies to decrease over time, making local AI even more accessible.

However, the environmental impact should be a focal point. While reducing cloud dependency saves energy, manufacturing the necessary high-powered local processors could offset these benefits. Companies must find a way to make environmentally sustainable choices in their pursuit of local AI.

"The real winners in the local AI race will be those who balance innovation with sustainability." — Environmental Tech Consultant, Lisa Chen

In terms of regulation, governments are likely to step in as local AI becomes ubiquitous, necessitating strong privacy laws and ethical guidelines. This could lead to a more uniform approach to AI's implementation, ensuring consumer protection across the board.

Future Considerations for Local AI

Will local AI reduce overall costs? Potentially, as less reliance on cloud services could save money long-term.

Are there environmental benefits? Yes, but it's a double-edged sword: less cloud traffic saves energy, while manufacturing and powering capable on-device processors adds some of it back.

How will regulations impact local AI? Stricter privacy laws may mandate the use of local AI for sensitive data.
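If rules like that do arrive, apps will need an explicit policy for what may leave the device. Below is a minimal Python sketch of a local-first routing rule. The `Sensitivity` levels, the `Request` fields, and the policy itself are hypothetical illustrations, not a reading of any actual regulation.

```python
from dataclasses import dataclass
from enum import Enum


class Sensitivity(Enum):
    PUBLIC = 1
    PERSONAL = 2
    REGULATED = 3  # for example, health or biometric data


class Target(Enum):
    ON_DEVICE = "on_device"
    CLOUD = "cloud"


@dataclass(frozen=True)
class Request:
    description: str
    sensitivity: Sensitivity
    fits_on_device: bool  # whether a local model can handle the task at all


def choose_target(request: Request) -> Target:
    """Local-first policy: run on the device when possible; only public data may leave it."""
    if request.fits_on_device:
        return Target.ON_DEVICE
    if request.sensitivity is Sensitivity.PUBLIC:
        return Target.CLOUD
    # Sensitive work that cannot run locally is refused rather than uploaded.
    raise PermissionError(
        f"{request.description!r} is {request.sensitivity.name} and cannot be sent to the cloud"
    )


news = Request("summarize a news article", Sensitivity.PUBLIC, fits_on_device=False)
pulse = Request("heart-rate anomaly check", Sensitivity.REGULATED, fits_on_device=True)
print(choose_target(news).value, choose_target(pulse).value)
```

The design choice worth noting is the failure mode: when sensitive work cannot run locally, the sketch raises an error instead of quietly falling back to the cloud, so a privacy violation shows up as a visible bug rather than a silent upload.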

Conclusion: Embrace the Local AI Wave

In summary, the shift to local AI is not just another tech trend; it's a fundamental realignment of how we interact with data and technology. As privacy concerns and the demand for speed escalate, local AI offers a compelling answer. While challenges remain, they're far from insurmountable.

The companies leading this transformation are setting a standard that others will likely follow. Whether it's through proprietary chipsets, like Apple's Neural Engine, or innovative startups creating new models, the landscape is ripe for innovation. If you're not already thinking about how local AI can fit into your strategy, you're likely already behind.

So what's the advice? Keep an eye on new developments, be wary of the environmental and security pitfalls, and make sure your next technology investment can handle the local AI wave. This isn't just about keeping up with the Joneses; it's about staying ahead of the curve.

Now, isn't it time you checked whether your tech is ready to embrace this privacy-preserving revolution?